
Build smarter, scalable solutions without added complexity

Metadata is the foundation for extracting intelligence from video. Alongside the video stream, Axis AI-powered cameras deliver accessible, real-time scene metadata that is organized, labeled, and standardized for easy integration.

Whether you're building for the edge, the cloud, or hybrid environments, AXIS Scene Metadata provides a basis for faster development, simpler scaling, and real-time automation.

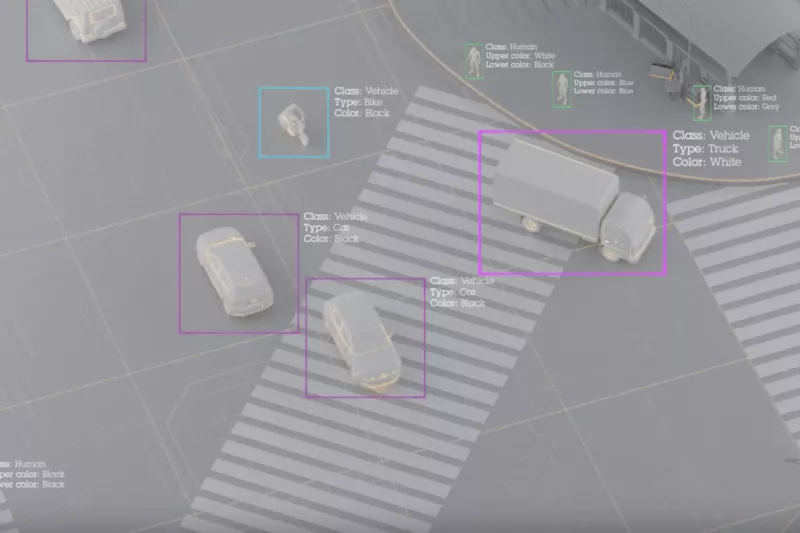

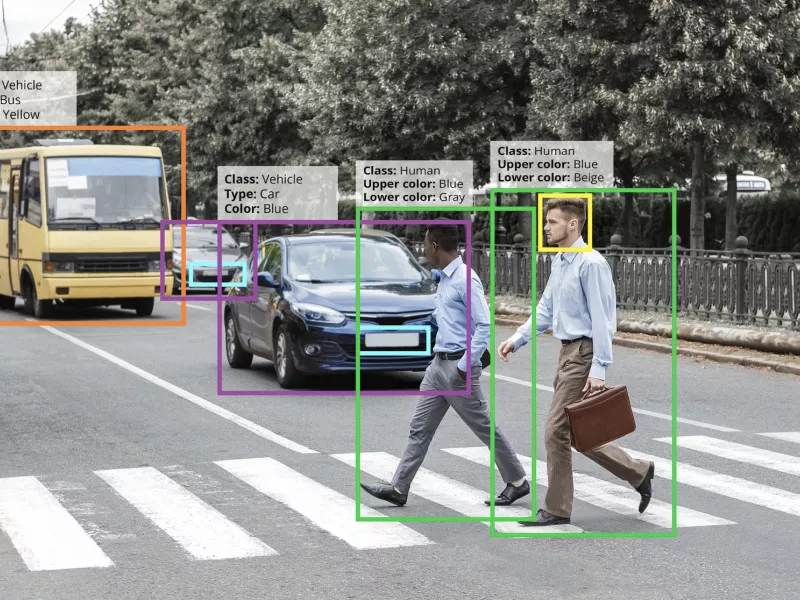

Track multiple objects

Axis devices can track multiple objects simultaneously, providing positions with persistent object IDs to ensure reliable tracking over time. You can use AI-based analytics with object detection and classification to filter metadata and trigger events. This enables real-time tracking, immediate alerts and automated responses.
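As an illustration of how persistent object IDs help when filtering metadata to trigger events, here is a minimal sketch. It assumes a hypothetical, simplified detection record (`Detection` with `object_id`, `object_class`, `score`, `x`, `y`). These are not the actual AXIS Scene Metadata field names, so consult the developer documentation for the real schema. The sketch raises at most one alert per tracked object that matches a class, a confidence threshold, and a rectangular zone.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, Sequence, Tuple


@dataclass(frozen=True)
class Detection:
    # Hypothetical, simplified record; NOT the real AXIS Scene Metadata schema.
    object_id: int      # persistent ID assigned by the tracker
    object_class: str   # e.g. "Human" or "Vehicle"
    score: float        # classification confidence, 0.0 to 1.0
    x: float            # normalized horizontal position, 0.0 to 1.0
    y: float            # normalized vertical position, 0.0 to 1.0


Zone = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def triggering_detections(
    frames: Iterable[Sequence[Detection]],
    wanted_class: str,
    min_score: float,
    zone: Zone,
) -> Iterator[Detection]:
    """Yield the first matching detection of each object ID, once per object."""
    x_min, y_min, x_max, y_max = zone
    alerted: set[int] = set()  # object IDs that have already triggered
    for frame in frames:
        for det in frame:
            if det.object_class != wanted_class or det.score < min_score:
                continue  # wrong class or too uncertain
            if not (x_min <= det.x <= x_max and y_min <= det.y <= y_max):
                continue  # outside the zone of interest
            if det.object_id in alerted:
                continue  # same tracked object: do not alert again
            alerted.add(det.object_id)
            yield det
```

Because the ID stays the same across frames, the filter can suppress duplicate alerts for one person lingering in the zone instead of firing on every frame.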

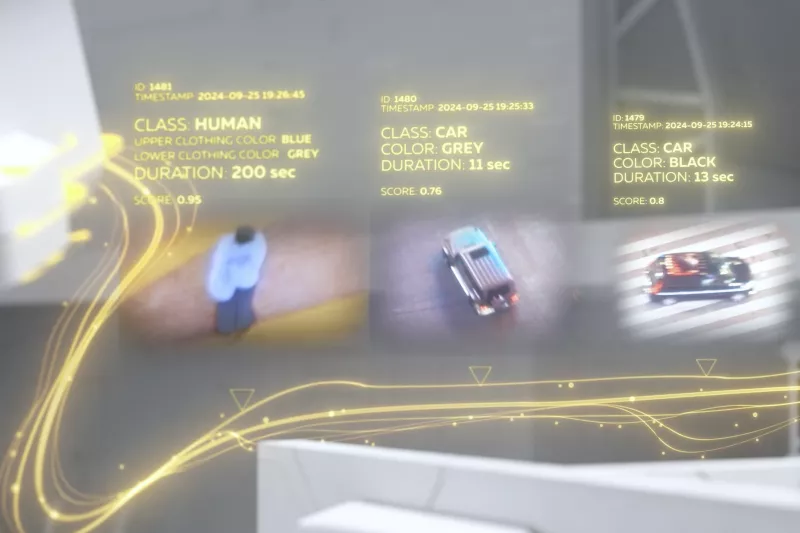

Get a snapshot from each scene

The snapshot feature makes reviewing footage from multiple cameras easy by automatically identifying and sending a cropped image of each detected object. You can quickly scan, store, or forward snapshots for further analysis, reducing the time, bandwidth, and costs associated with managing raw video.

Insights from multiple sensors

Access real-time metadata from video streams and other sensors. You can retrieve data live or access it later through VMS integrations. All metadata is time-synced and aligned with the source content for seamless event correlation. Time synchronization enables efficient indexing, faster search, and correlation of data from multiple sources.
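To show why shared timestamps make correlation straightforward, the following sketch pairs each video event with the nearest radar reading within a time tolerance. The record types `VideoEvent` and `RadarReading`, their fields, and the tolerance value are hypothetical and chosen only for illustration; they do not describe the actual metadata format.

```python
import bisect
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class VideoEvent:
    # Hypothetical record: timestamp in seconds on the shared clock.
    timestamp: float
    object_id: int


@dataclass(frozen=True)
class RadarReading:
    # Hypothetical record: timestamp in seconds on the same shared clock.
    timestamp: float
    speed_mps: float


def nearest_index(sorted_ts: Sequence[float], t: float, tol: float) -> Optional[int]:
    """Index of the timestamp closest to t within tol, or None if none qualifies."""
    i = bisect.bisect_left(sorted_ts, t)
    best: Optional[int] = None
    for j in (i - 1, i):  # only the neighbours of the insertion point can be nearest
        if 0 <= j < len(sorted_ts):
            d = abs(sorted_ts[j] - t)
            if d <= tol and (best is None or d < abs(sorted_ts[best] - t)):
                best = j
    return best


def correlate(
    video: Sequence[VideoEvent], radar: Sequence[RadarReading], tol: float
) -> List[Tuple[VideoEvent, Optional[RadarReading]]]:
    """Pair each video event with the closest radar reading in time, if any."""
    radar_sorted = sorted(radar, key=lambda r: r.timestamp)
    radar_ts = [r.timestamp for r in radar_sorted]
    pairs = []
    for v in video:
        idx = nearest_index(radar_ts, v.timestamp, tol)
        pairs.append((v, radar_sorted[idx] if idx is not None else None))
    return pairs
```

The binary search keeps each lookup logarithmic in the number of radar readings; this only works because both sources report on a common, synchronized clock.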

From detection to integration

Supports multiple objects and attributes

AXIS Scene Metadata includes:

- Object types such as humans and vehicles

- Attributes like hats and bags

- Identification of vehicle type and color

- Movement data including position, direction, speed*, geo-coordinates* and timestamps.

For a full list of supported objects, attributes, and more, please see the developer documentation.

Note: *Requires radar or radar-video fusion camera integration.

Choose how to consume metadata

AXIS Scene Metadata is available either frame by frame or as consolidated summaries. Both formats include object classification, position, direction, speed, attributes and timestamps.

- Frame-by-frame: Ideal for real-time applications that require high temporal precision, like queue monitoring, traffic management, or loitering detection.

- Consolidated metadata: Simplifies post-event workflows by summarizing each object’s presence from entry to exit. Great for forensic search and statistical analysis, without the overhead of processing every frame.
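The difference between the two formats can be sketched by collapsing frame-by-frame observations into one summary per object, from first to last appearance. The `FrameObservation` and `ObjectSummary` types below are hypothetical simplifications, not the actual consolidated metadata format, and the sketch keeps the first class reported for an object rather than resolving class changes.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Tuple


@dataclass(frozen=True)
class FrameObservation:
    # Hypothetical per-frame record; NOT the real AXIS Scene Metadata schema.
    timestamp: float   # seconds
    object_id: int     # persistent tracker ID
    object_class: str
    x: float
    y: float


@dataclass
class ObjectSummary:
    object_id: int
    object_class: str              # first class seen (simplification)
    first_seen: float
    last_seen: float
    entry_position: Tuple[float, float]
    exit_position: Tuple[float, float]
    observations: int = 1

    @property
    def duration(self) -> float:
        """Time from entry to exit, e.g. for dwell-time statistics."""
        return self.last_seen - self.first_seen


def consolidate(observations: Iterable[FrameObservation]) -> Dict[int, ObjectSummary]:
    """Collapse per-frame observations into one summary per object ID."""
    summaries: Dict[int, ObjectSummary] = {}
    for obs in sorted(observations, key=lambda o: o.timestamp):
        summary = summaries.get(obs.object_id)
        if summary is None:
            # First sighting: entry and exit start at the same point.
            summaries[obs.object_id] = ObjectSummary(
                obs.object_id, obs.object_class, obs.timestamp, obs.timestamp,
                (obs.x, obs.y), (obs.x, obs.y),
            )
        else:
            # Later sighting: extend the track's exit time and position.
            summary.last_seen = obs.timestamp
            summary.exit_position = (obs.x, obs.y)
            summary.observations += 1
    return summaries
```

A forensic search then only needs to scan one record per object instead of every frame, which is the overhead reduction the consolidated format aims for.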

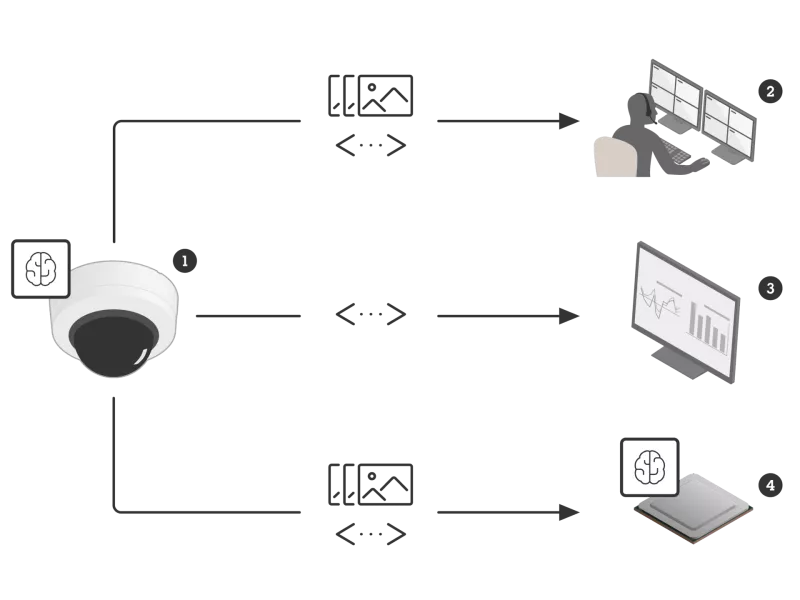

Build your own solution

AXIS Scene Metadata is designed for efficient use across diverse systems and applications:

1. Edge applications – enabling fast, localized decisions and automation

2. Video Management Systems – supporting real-time alerts and smarter post-event search

3. IoT and BI platforms – feeding dashboards with clear data on patterns and trends

4. A second analytics layer – powering deeper analysis in addition to initial edge processing